Posted by Thomas Ezan, Sr Developer Relation Engineer

Thousands of developers across the globe are harnessing the power of the Gemini 1.5 Pro and Gemini 1.5 Flash models to infuse advanced generative AI features into their applications. Android developers are no exception, and with the stable version of Vertex AI in Firebase launching in a few weeks (it has been available in Beta since Google I/O), it's the perfect time to explore how your app can benefit from it. We just published a codelab to help you get started.

Let's dive deep into some advanced capabilities of the Gemini API that go beyond simple text prompting, and discover the exciting use cases they can unlock in your Android app.

Shaping AI behavior with system instructions

System instructions serve as a "preamble" that you incorporate before the user prompt. They let you shape the model's behavior to align with your specific requirements and scenarios. You set the instructions when you initialize the model, and those instructions then persist through all interactions with the model, across multiple user and model turns.

For example, you can use system instructions to:

- Define a persona or role for a chatbot (e.g., "explain like I'm 5")

- Specify the output format of the response (e.g., Markdown, YAML, etc.)

- Set the output style and tone (e.g., verbosity, formality, etc.)

- Define the goals or rules for the task (e.g., "return a code snippet without further explanation")

- Provide additional context for the prompt (e.g., a knowledge cutoff date)

To use system instructions in your Android app, pass them as a parameter when you initialize the model:

```kotlin
val generativeModel = Firebase.vertexAI.generativeModel(
    modelName = "gemini-1.5-flash",
    ...
    systemInstruction = content {
        text("You are a knowledgeable tutor. Answer the questions using the Socratic tutoring method.")
    }
)
```

You can learn more about system instructions in the Vertex AI in Firebase documentation.
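Conceptually, the system instruction behaves like a preamble that accompanies every turn of the conversation, while user and model turns accumulate after it. The plain-Kotlin sketch below models that idea with hypothetical `Turn` and `Conversation` types. It is not the SDK's API and does not call Gemini (the real API carries the system instruction in its own field rather than as a turn); it only illustrates why an instruction set once at initialization keeps applying to later turns.

```kotlin
// Hypothetical types: a stand-in for the request the SDK assembles, not the Firebase API.
data class Turn(val role: String, val text: String)

class Conversation(private val systemInstruction: String) {
    private val history = mutableListOf<Turn>()

    // Records a user turn and returns what a request would carry for this turn:
    // the system instruction first, then every turn so far.
    fun nextRequest(userText: String): List<Turn> {
        history += Turn("user", userText)
        return listOf(Turn("system", systemInstruction)) + history
    }

    // Records the model's reply so that later requests include it.
    fun recordModelReply(modelText: String) {
        history += Turn("model", modelText)
    }
}
```

Only the history grows between turns. The preamble is set once in the constructor and is never edited afterwards.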

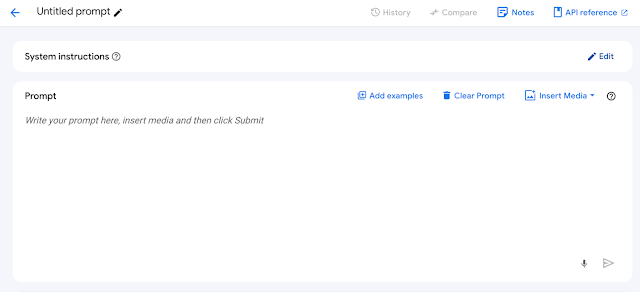

You can also easily test your prompt with different system instructions in Vertex AI Studio, the Google Cloud console tool for rapidly prototyping and testing prompts with Gemini models.

When you are ready to go to production, it is recommended to target a specific version of the model (e.g., gemini-1.5-flash-002). However, as new model versions are released and previous ones are deprecated, it is advisable to use Firebase Remote Config so that you can update the version of the Gemini model without releasing a new version of your app.
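The fallback logic can be sketched in plain Kotlin. The sketch assumes a hypothetical Remote Config parameter named `gemini_model_name`. A `Map<String, String>` stands in for the fetched config values so the example runs without Firebase. In an app, you would read the value from Remote Config instead and pass the result as `modelName`.

```kotlin
// Hypothetical parameter key; use whatever key you define in the Remote Config console.
val MODEL_NAME_KEY = "gemini_model_name"

// Pinned version shipped with the app, used when no usable remote value is available.
val DEFAULT_MODEL_NAME = "gemini-1.5-flash-002"

// Returns the remotely configured model name if it looks usable, otherwise the pinned default.
fun resolveModelName(remoteValues: Map<String, String>): String {
    val remote = remoteValues[MODEL_NAME_KEY]?.trim()
    // Only accept values that name a Gemini model; anything else falls back to the default.
    return if (remote != null && remote.startsWith("gemini-")) remote else DEFAULT_MODEL_NAME
}
```

Keeping a pinned default in the app means a missing or malformed remote value degrades to a known-good model rather than a failed request.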

Beyond chatbots: leveraging generative AI for advanced use cases

While chatbots are a popular application of generative AI, the capabilities of the Gemini API go beyond conversational interfaces, and you can integrate multimodal GenAI-enabled features into various aspects of your Android app.

Many tasks that previously required human intervention (such as analyzing text, image, or video content, synthesizing data into a human-readable format, or engaging in a creative process to generate new content) can potentially be automated using GenAI.

Gemini JSON support

Android apps don't interface well with natural language outputs. JSON, by contrast, is ubiquitous in Android development and provides a more structured way for Android apps to consume input. However, ensuring proper key/value formatting when working with generative models can be challenging.

With the general availability of Vertex AI in Firebase, new options streamline JSON generation with proper key/value formatting:

Response MIME type identifier

If you have tried generating JSON with a generative AI model, you have likely found yourself with unwanted extra text that makes parsing the JSON more challenging.

For example:

````
Sure, here is your JSON:
```
{
  "someKey": "someValue",
  ...
}
```
````
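If you cannot set the response MIME type, a defensive fallback is to extract the JSON payload from the raw text before parsing it. The helper below is a hypothetical sketch in plain Kotlin. It drops the surrounding Markdown fence and any leading chatter by keeping only the text between the first `{` and the last `}`. It assumes the payload is a single JSON object and does not validate it, so you still need to pass the result to your JSON parser.

```kotlin
// Hypothetical helper: returns the substring spanning the outermost JSON object, or null.
fun extractJsonObject(modelText: String): String? {
    val start = modelText.indexOf('{')
    val end = modelText.lastIndexOf('}')
    // No braces, or braces in the wrong order: there is no object to extract.
    if (start == -1 || end == -1 || end < start) return null
    return modelText.substring(start, end + 1)
}
```

This is a heuristic: a stray brace in the surrounding prose would confuse it, which is one reason to prefer asking the model for `application/json` directly.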

When using Gemini 1.5 Pro or Gemini 1.5 Flash, you can explicitly set the model's response MIME type to application/json in the generation configuration, which instructs the model to generate well-structured JSON output.

```kotlin
val generativeModel = Firebase.vertexAI.generativeModel(
    modelName = "gemini-1.5-flash",
    …
    generationConfig = generationConfig {
        responseMimeType = "application/json"
    }
)
```

Review the API reference for more details.

Soon, the Android SDK for Vertex AI in Firebase will enable you to define the JSON schema expected in the response.

Multimodal capabilities

Both Gemini 1.5 Flash and Gemini 1.5 Pro are multimodal models, which means they can process input in multiple formats, including text, images, audio, and video. In addition, both have long context windows, capable of handling up to 1 million tokens for Gemini 1.5 Flash and 2 million tokens for Gemini 1.5 Pro.
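For very long inputs, a simple pre-check is to compare an estimated token count against each model's context window. The sketch below uses the approximate limits quoted above (about 1 million and 2 million tokens); check the model documentation for exact values. The token estimate is an input you would obtain separately, for example with the SDK's `countTokens()`. The function name is hypothetical.

```kotlin
// Approximate context-window sizes from the text, in preference order (Flash first).
val contextWindows = linkedMapOf(
    "gemini-1.5-flash" to 1_000_000,
    "gemini-1.5-pro" to 2_000_000,
)

// Returns the first model (in preference order) whose window fits the estimate, or null.
fun firstModelThatFits(estimatedTokens: Int): String? =
    contextWindows.entries.firstOrNull { estimatedTokens <= it.value }?.key
```

A `null` result signals that the input needs to be split or summarized before it can be sent to either model.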

These features open the door to innovative functionality that was previously out of reach, such as automatically generating descriptive captions for images, identifying topics in a conversation and generating chapters from an audio file, or describing the scenes and actions in a video file.

You can pass an image to the model as shown in this example:

```kotlin
val contentResolver = applicationContext.contentResolver
contentResolver.openInputStream(imageUri).use { stream ->
    stream?.let {
        val bitmap = BitmapFactory.decodeStream(stream)
        // Provide a prompt that includes the image specified above and text
        val prompt = content {
            image(bitmap)
            text("How many people are in this picture?")
        }
        // generateContent() is a suspend function, so call it from a coroutine.
        // The call stays inside let so that `prompt` is in scope.
        val response = generativeModel.generateContent(prompt)
    }
}
```

You can also pass a video to the model:

```kotlin
val contentResolver = applicationContext.contentResolver
contentResolver.openInputStream(videoUri).use { stream ->
    stream?.let {
        val bytes = stream.readBytes()
        // Provide a prompt that includes the video specified above and text
        val prompt = content {
            blob("video/mp4", bytes)
            text("What's in the video?")
        }
        val fullResponse = generativeModel.generateContent(prompt)
    }
}
```

You can learn more about multimodal prompting in the Vertex AI in Firebase documentation.

Note: This method lets you pass files up to 20 MB. For larger files, use Cloud Storage for Firebase and include the file's URL in your multimodal request. Read the documentation for more information.
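The 20 MB threshold suggests a simple routing decision before you build the request. The sketch below is hypothetical. `MediaSource` and `chooseMediaSource` are illustrative names. The limit is interpreted here as 20 × 1024 × 1024 bytes, which is an assumption. The Cloud Storage branch only carries a URL that you obtain by uploading the file yourself. As a conservative choice, the sketch also reserves some headroom for the rest of the prompt.

```kotlin
// Illustrative limit from the note above; 20 MB interpreted as 20 * 1024 * 1024 bytes.
val INLINE_LIMIT_BYTES = 20L * 1024 * 1024

// Hypothetical representation of where the media for a prompt will come from.
sealed interface MediaSource {
    data class Inline(val sizeBytes: Long) : MediaSource
    data class CloudStorage(val url: String) : MediaSource
}

// Chooses inline data when the file (plus headroom for the rest of the prompt) fits;
// otherwise calls uploadAndGetUrl, which the caller implements, to get a storage URL.
fun chooseMediaSource(
    sizeBytes: Long,
    headroomBytes: Long = 512L * 1024,
    uploadAndGetUrl: () -> String,
): MediaSource =
    if (sizeBytes + headroomBytes <= INLINE_LIMIT_BYTES) {
        MediaSource.Inline(sizeBytes)
    } else {
        MediaSource.CloudStorage(uploadAndGetUrl())
    }
```

Passing the upload as a lambda keeps the decision testable and means the upload runs only when it is actually needed.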

Function calling: Extending the model's capabilities

Function calling lets you extend the capabilities of generative models. For example, you can enable the model to retrieve information from your SQL database and feed it back into the context of the prompt. You can also let the model trigger actions by calling functions in your app's source code. In essence, function calls bridge the gap between the Gemini models and your Kotlin code.

Take the example of a food delivery application that wants to implement a conversational interface with Gemini 1.5 Flash. Assume that this application has a getFoodOrder(cuisine: String) function that returns the list of the user's orders for a specific type of cuisine:

```kotlin
fun getFoodOrder(cuisine: String): JSONObject {
    // implementation…
}
```

Note that, to be usable by the model, the function needs to return its response as a JSONObject.

To make the function available to Gemini 1.5 Flash, use defineFunction to create a definition of it that the model can understand:

```kotlin
val getOrderListFunction = defineFunction(
    name = "getOrderList",
    description = "Get the list of food orders from the user for a given type of cuisine.",
    Schema.str(name = "cuisineType", description = "the type of cuisine for the order")
) { cuisineType ->
    getFoodOrder(cuisineType)
}
```

Then, when you instantiate the model, share this function definition with the model using the tools parameter:

```kotlin
val generativeModel = Firebase.vertexAI.generativeModel(
    modelName = "gemini-1.5-flash",
    ...
    // Register the function definition created above
    tools = listOf(Tool(listOf(getOrderListFunction)))
)
```

Finally, when you get a response from the model, check whether the model is actually requesting to execute the function:

```kotlin
// Send the message to the generative model
var response = chat.sendMessage(prompt)

// Check if the model responded with a function call
response.functionCall?.let { functionCall ->
    // Try to retrieve the stored lambda from the model's tools and
    // throw an exception if the returned function was not declared.
    // firstOrNull (not first) lets the ?: branch handle the "not found" case.
    val matchedFunction = generativeModel.tools?.flatMap { it.functionDeclarations }
        ?.firstOrNull { it.name == functionCall.name }
        ?: throw IllegalStateException("Function not found: ${functionCall.name}")

    // Call the lambda retrieved above
    val apiResponse: JSONObject = matchedFunction.execute(functionCall)

    // Send the API response back to the generative model
    // so that it generates a text response that can be displayed to the user
    response = chat.sendMessage(
        content(role = "function") {
            part(FunctionResponsePart(functionCall.name, apiResponse))
        }
    )
}

// If the model responds with text, show it in the UI
response.text?.let { modelResponse ->
    println(modelResponse)
}
```
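The lookup step in the snippet can also be organized as a name-keyed registry, which makes the "function not declared" case explicit. The sketch below is plain Kotlin and deliberately avoids the SDK types. `FunctionRegistry`, the `Map<String, String>` arguments, and the `String` result are hypothetical stand-ins for the function call's arguments and the `JSONObject` response.

```kotlin
// Hypothetical registry mapping a declared function name to its handler.
class FunctionRegistry {
    private val handlers = mutableMapOf<String, (Map<String, String>) -> String>()

    fun register(name: String, handler: (Map<String, String>) -> String) {
        // Reject duplicate names so that a lookup can never be ambiguous.
        require(name !in handlers) { "Function already registered: $name" }
        handlers[name] = handler
    }

    // Runs the handler for a model-requested call; fails loudly for undeclared names.
    fun execute(name: String, args: Map<String, String>): String {
        val handler = handlers[name] ?: throw IllegalStateException("Function not found: $name")
        return handler(args)
    }
}
```

A map lookup replaces the linear search over declarations, and duplicate names are caught at registration time rather than at call time.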

To summarize, you provide the functions (or tools) to the model at initialization.

Then, when appropriate, the model requests the execution of the matching function, your app runs it, and you send the results back to the model.
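Because the model may ask for more than one function call before it produces text, the round trip is often written as a loop with an upper bound. The sketch below simulates that loop without the SDK. `ModelReply`, `runToolLoop`, and the fake model used to exercise it are hypothetical, and `maxRoundTrips` is an arbitrary safety cap that stops a misbehaving conversation from looping forever.

```kotlin
// Hypothetical model reply: either a request to call a function or a final text answer.
sealed interface ModelReply {
    data class Call(val name: String, val args: Map<String, String>) : ModelReply
    data class Text(val text: String) : ModelReply
}

// Sends the prompt, executes each requested function, and sends its result back
// until the model answers with text or the round-trip cap is reached.
fun runToolLoop(
    prompt: String,
    send: (String) -> ModelReply,
    execute: (String, Map<String, String>) -> String,
    maxRoundTrips: Int = 5,
): String {
    var reply = send(prompt)
    repeat(maxRoundTrips) {
        when (val current = reply) {
            is ModelReply.Text -> return current.text
            is ModelReply.Call -> reply = send(execute(current.name, current.args))
        }
    }
    // After the last allowed round trip, accept a text reply if one arrived.
    return (reply as? ModelReply.Text)?.text
        ?: throw IllegalStateException("No text answer after $maxRoundTrips round trips")
}
```

Injecting `send` and `execute` as lambdas keeps the control flow testable with a scripted fake model before you wire it to a real chat session.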

You can read more about function calling in the Vertex AI in Firebase documentation.

Unlocking the potential of the Gemini API in your app

The Gemini API offers a treasure trove of advanced features that empower Android developers to craft truly innovative and engaging applications. By going beyond basic text prompts and exploring the capabilities highlighted in this blog post, you can create AI-powered experiences that delight your users and set your app apart in the competitive Android landscape.

Read more about how some Android apps are already starting to leverage the Gemini API.

To learn more about AI on Android, check out the other resources we have available during AI on Android Spotlight Week.

Use the #AndroidAI hashtag to share your creations or feedback on social media, and join us at the forefront of the AI revolution!

The code snippets in this blog post have the following license:

```
// Copyright 2024 Google LLC.
// SPDX-License-Identifier: Apache-2.0
```